Upload folder using huggingface_hub

- README.md +33 -6

- chat_template.json +3 -0

- config.json +250 -5

- generation_config.json +5 -4

- model-00001-of-00002.safetensors +2 -2

- model-00002-of-00002.safetensors +2 -2

- model.safetensors.index.json +0 -0

README.md

CHANGED

|

@@ -1,11 +1,17 @@

|

|

| 1 |

---

|

| 2 |

tags:

|

| 3 |

- unsloth

|

| 4 |

-

base_model:

|

| 5 |

-

- Qwen/Qwen3-VL-4B-Instruct-FP8

|

| 6 |

license: apache-2.0

|

| 7 |

pipeline_tag: image-text-to-text

|

| 8 |

---

|

| 9 |

<div>

|

| 10 |

<p style="margin-top: 0;margin-bottom: 0;">

|

| 11 |

<em><a href="https://docs.unsloth.ai/basics/unsloth-dynamic-v2.0-gguf">Unsloth Dynamic 2.0</a> achieves superior accuracy & outperforms other leading quants.</em>

|

|

@@ -29,7 +35,7 @@ pipeline_tag: image-text-to-text

|

|

| 29 |

|

| 30 |

# Qwen3-VL-4B-Instruct-FP8

|

| 31 |

|

| 32 |

-

> This repository contains an FP8 quantized version of the [Qwen3-VL-4B-Instruct

|

| 33 |

|

| 34 |

|

| 35 |

Meet Qwen3-VL — the most powerful vision-language model in the Qwen series to date.

|

|

@@ -81,11 +87,10 @@ This is the weight repository for Qwen3-VL-4B-Instruct-FP8.

|

|

| 81 |

|

| 82 |

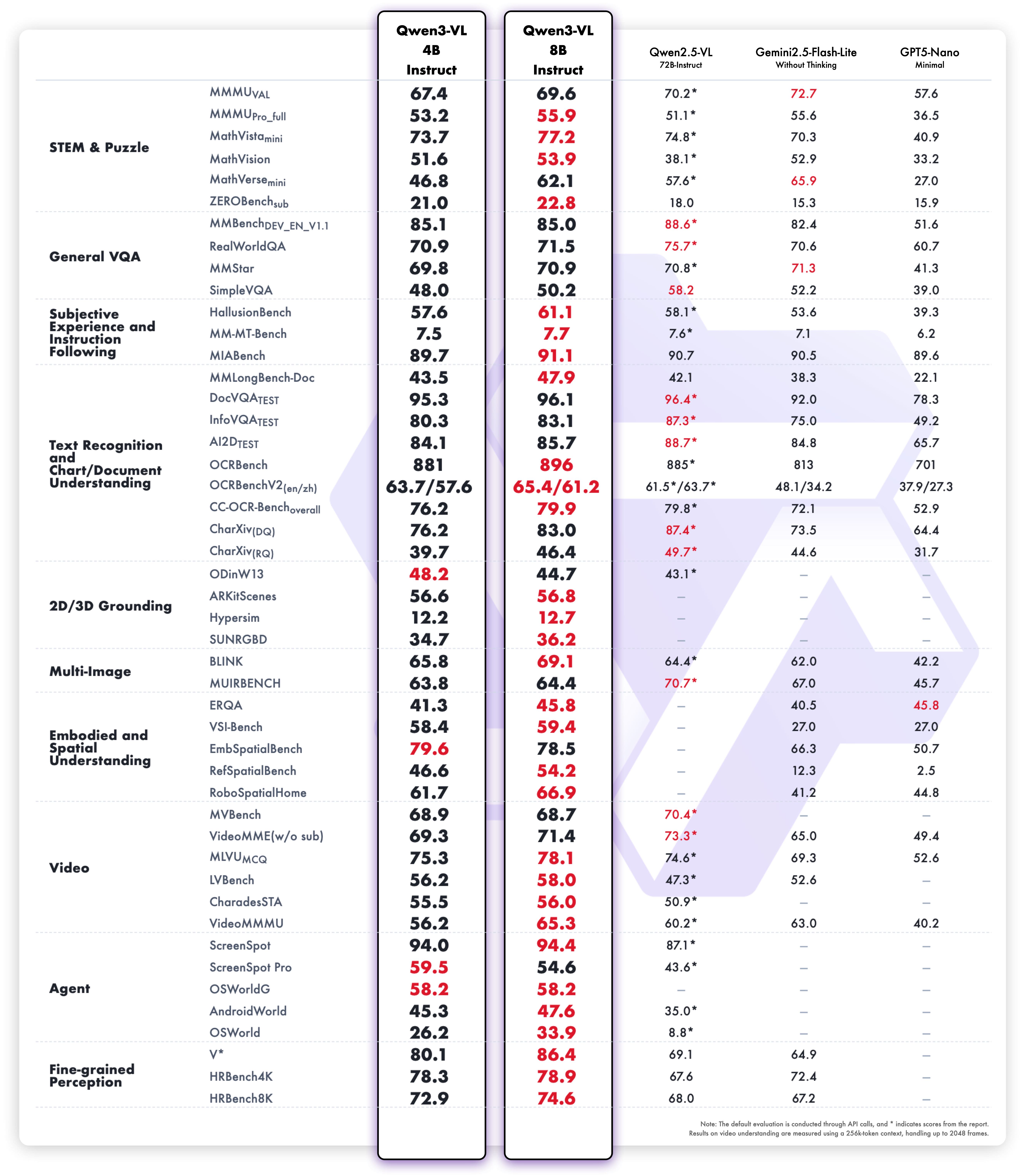

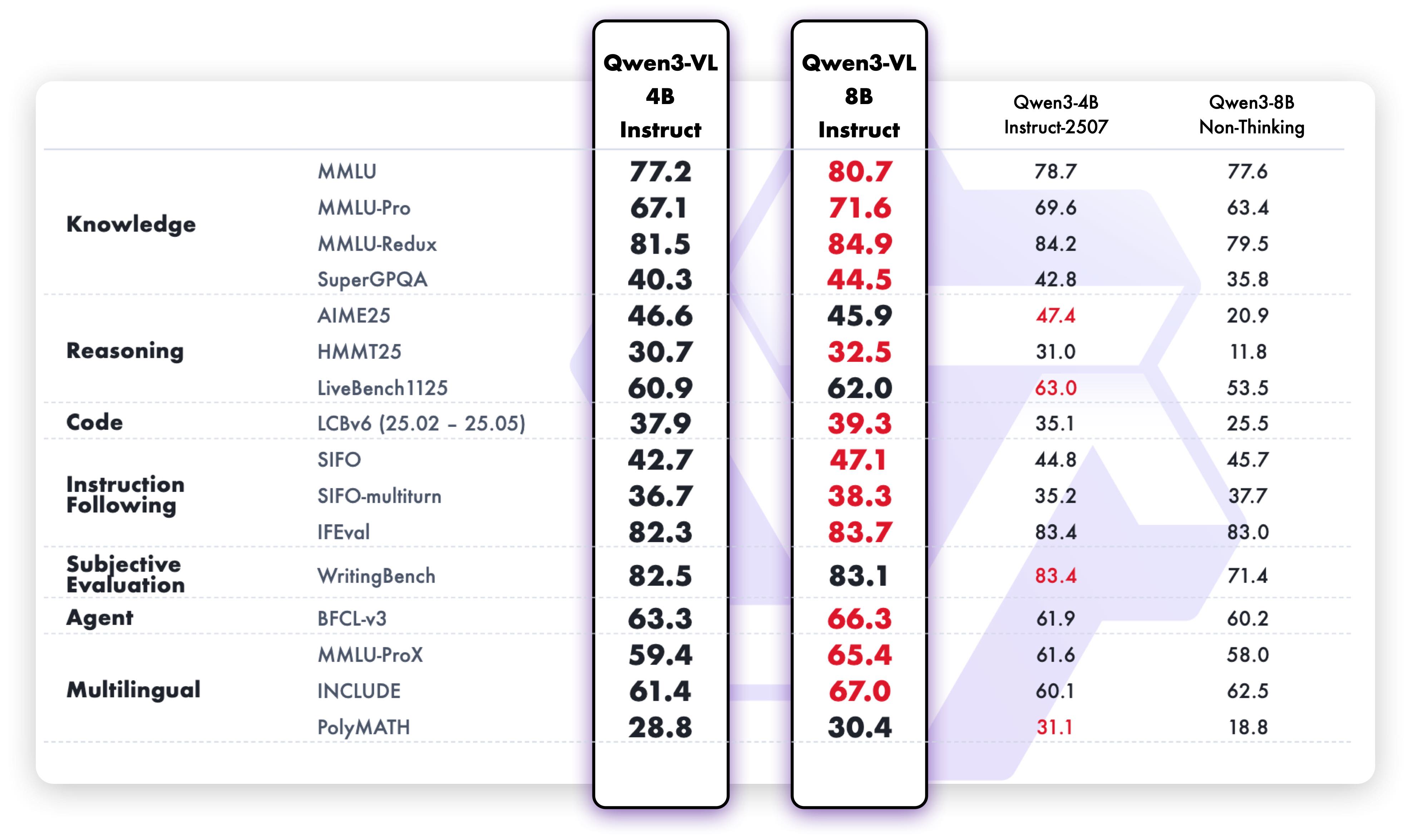

**Multimodal performance**

|

| 83 |

|

| 84 |

-

model. The quantization method is fine-grained fp8 quantization with block size of 128, and its performance metrics are nearly identical to those of the original BF16 model. Enjoy!
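As a rough illustration of what "fine-grained fp8 quantization with block size of 128" can mean, the sketch below computes one scale per 128 x 128 weight tile so that each tile fits the FP8 E4M3 range. The max-abs scaling rule, the helper names `block_scales` and `scale_to_fp8_range`, and the use of plain Python lists are assumptions for illustration only; the diff does not show the quantization code that was actually used, and no real FP8 rounding is performed here.

```python
E4M3_MAX = 448.0  # largest finite magnitude representable in FP8 E4M3


def block_scales(weight, block=128):
    """Return one scale per (block x block) tile of a 2-D list of floats.

    Assumed rule: scale = max(|w|) / E4M3_MAX within each tile.
    """
    rows, cols = len(weight), len(weight[0])
    scales = []
    for r0 in range(0, rows, block):
        row_scales = []
        for c0 in range(0, cols, block):
            tile_max = max(
                abs(weight[r][c])
                for r in range(r0, min(r0 + block, rows))
                for c in range(c0, min(c0 + block, cols))
            )
            # All-zero tiles get a neutral scale so the division stays safe.
            row_scales.append(tile_max / E4M3_MAX if tile_max > 0 else 1.0)
        scales.append(row_scales)
    return scales


def scale_to_fp8_range(weight, scales, block=128):
    """Divide every weight by its tile's scale (no FP8 rounding is applied)."""
    return [
        [value / scales[r // block][c // block] for c, value in enumerate(row)]
        for r, row in enumerate(weight)
    ]
```

Dequantization in this scheme would multiply each stored value by the scale of its tile, which is why a small tile keeps rounding error local to 128 x 128 weights rather than a whole tensor.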

|

| 39 |

|

| 40 |

|

| 41 |

Meet Qwen3-VL — the most powerful vision-language model in the Qwen series to date.

|

|

|

|

| 87 |

|

| 88 |

**Multimodal performance**

|

| 89 |

|

| 90 |

+

|

| 91 |

|

| 92 |

**Pure text performance**

|

| 93 |

+

|

|

|

|

| 94 |

|

| 95 |

## Quickstart

|

| 96 |

|

|

|

|

| 251 |

```

|

| 252 |

|

| 253 |

|

| 254 |

+

### Generation Hyperparameters

|

| 255 |

+

#### VL

|

| 256 |

+

```bash

|

| 257 |

+

export greedy='false'

|

| 258 |

+

export top_p=0.8

|

| 259 |

+

export top_k=20

|

| 260 |

+

export temperature=0.7

|

| 261 |

+

export repetition_penalty=1.0

|

| 262 |

+

export presence_penalty=1.5

|

| 263 |

+

export out_seq_length=16384

|

| 264 |

+

```

|

| 265 |

+

|

| 266 |

+

#### Text

|

| 267 |

+

```bash

|

| 268 |

+

export greedy='false'

|

| 269 |

+

export top_p=1.0

|

| 270 |

+

export top_k=40

|

| 271 |

+

export repetition_penalty=1.0

|

| 272 |

+

export presence_penalty=2.0

|

| 273 |

+

export temperature=1.0

|

| 274 |

+

export out_seq_length=32768

|

| 275 |

+

```
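The `export` lines above are shell settings, so a script that wants them as typed values has to parse them. A minimal sketch, assuming the VL block is available as a string; `parse_exports` and `coerce` are hypothetical helper names, and how an inference framework maps keys such as `greedy` or `out_seq_length` onto its own parameters is not stated in the README.

```python
import shlex

VL_EXPORTS = """
export greedy='false'
export top_p=0.8
export top_k=20
export temperature=0.7
export repetition_penalty=1.0
export presence_penalty=1.5
export out_seq_length=16384
"""


def coerce(raw):
    """Turn a shell string into bool, int, or float where possible."""
    if raw in ("true", "false"):
        return raw == "true"
    for cast in (int, float):
        try:
            return cast(raw)
        except ValueError:
            pass
    return raw


def parse_exports(text):
    """Collect `export KEY=VALUE` lines into a dict of typed values."""
    params = {}
    for line in text.splitlines():
        tokens = shlex.split(line)  # also strips the quotes around 'false'
        if len(tokens) != 2 or tokens[0] != "export":
            continue
        key, _, raw = tokens[1].partition("=")
        params[key] = coerce(raw)
    return params


vl_params = parse_exports(VL_EXPORTS)
```

The same function can be applied to the Text block; only the values differ.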

|

| 276 |

|

| 277 |

|

| 278 |

## Citation

|

chat_template.json

ADDED

|

@@ -0,0 +1,3 @@

|

|

| 1 |

+

{

|

| 2 |

+

"chat_template": "{%- if tools %}\n {{- '<|im_start|>system\\n' }}\n {%- if messages[0].role == 'system' %}\n {%- if messages[0].content is string %}\n {{- messages[0].content }}\n {%- else %}\n {%- for content in messages[0].content %}\n {%- if 'text' in content %}\n {{- content.text }}\n {%- endif %}\n {%- endfor %}\n {%- endif %}\n {{- '\\n\\n' }}\n {%- endif %}\n {{- \"# Tools\\n\\nYou may call one or more functions to assist with the user query.\\n\\nYou are provided with function signatures within <tools></tools> XML tags:\\n<tools>\" }}\n {%- for tool in tools %}\n {{- \"\\n\" }}\n {{- tool | tojson }}\n {%- endfor %}\n {{- \"\\n</tools>\\n\\nFor each function call, return a json object with function name and arguments within <tool_call></tool_call> XML tags:\\n<tool_call>\\n{\\\"name\\\": <function-name>, \\\"arguments\\\": <args-json-object>}\\n</tool_call><|im_end|>\\n\" }}\n{%- else %}\n {%- if messages[0].role == 'system' %}\n {{- '<|im_start|>system\\n' }}\n {%- if messages[0].content is string %}\n {{- messages[0].content }}\n {%- else %}\n {%- for content in messages[0].content %}\n {%- if 'text' in content %}\n {{- content.text }}\n {%- endif %}\n {%- endfor %}\n {%- endif %}\n {{- '<|im_end|>\\n' }}\n {%- endif %}\n{%- endif %}\n{%- set image_count = namespace(value=0) %}\n{%- set video_count = namespace(value=0) %}\n{%- for message in messages %}\n {%- if message.role == \"user\" %}\n {{- '<|im_start|>' + message.role + '\\n' }}\n {%- if message.content is string %}\n {{- message.content }}\n {%- else %}\n {%- for content in message.content %}\n {%- if content.type == 'image' or 'image' in content or 'image_url' in content %}\n {%- set image_count.value = image_count.value + 1 %}\n {%- if add_vision_id %}Picture {{ image_count.value }}: {% endif -%}\n <|vision_start|><|image_pad|><|vision_end|>\n {%- elif content.type == 'video' or 'video' in content %}\n {%- set video_count.value = video_count.value + 1 %}\n {%- if add_vision_id %}Video {{ 
video_count.value }}: {% endif -%}\n <|vision_start|><|video_pad|><|vision_end|>\n {%- elif 'text' in content %}\n {{- content.text }}\n {%- endif %}\n {%- endfor %}\n {%- endif %}\n {{- '<|im_end|>\\n' }}\n {%- elif message.role == \"assistant\" %}\n {{- '<|im_start|>' + message.role + '\\n' }}\n {%- if message.content is string %}\n {{- message.content }}\n {%- else %}\n {%- for content_item in message.content %}\n {%- if 'text' in content_item %}\n {{- content_item.text }}\n {%- endif %}\n {%- endfor %}\n {%- endif %}\n {%- if message.tool_calls %}\n {%- for tool_call in message.tool_calls %}\n {%- if (loop.first and message.content) or (not loop.first) %}\n {{- '\\n' }}\n {%- endif %}\n {%- if tool_call.function %}\n {%- set tool_call = tool_call.function %}\n {%- endif %}\n {{- '<tool_call>\\n{\"name\": \"' }}\n {{- tool_call.name }}\n {{- '\", \"arguments\": ' }}\n {%- if tool_call.arguments is string %}\n {{- tool_call.arguments }}\n {%- else %}\n {{- tool_call.arguments | tojson }}\n {%- endif %}\n {{- '}\\n</tool_call>' }}\n {%- endfor %}\n {%- endif %}\n {{- '<|im_end|>\\n' }}\n {%- elif message.role == \"tool\" %}\n {%- if loop.first or (messages[loop.index0 - 1].role != \"tool\") %}\n {{- '<|im_start|>user' }}\n {%- endif %}\n {{- '\\n<tool_response>\\n' }}\n {%- if message.content is string %}\n {{- message.content }}\n {%- else %}\n {%- for content in message.content %}\n {%- if content.type == 'image' or 'image' in content or 'image_url' in content %}\n {%- set image_count.value = image_count.value + 1 %}\n {%- if add_vision_id %}Picture {{ image_count.value }}: {% endif -%}\n <|vision_start|><|image_pad|><|vision_end|>\n {%- elif content.type == 'video' or 'video' in content %}\n {%- set video_count.value = video_count.value + 1 %}\n {%- if add_vision_id %}Video {{ video_count.value }}: {% endif -%}\n <|vision_start|><|video_pad|><|vision_end|>\n {%- elif 'text' in content %}\n {{- content.text }}\n {%- endif %}\n {%- endfor %}\n {%- endif %}\n {{- 
'\\n</tool_response>' }}\n {%- if loop.last or (messages[loop.index0 + 1].role != \"tool\") %}\n {{- '<|im_end|>\\n' }}\n {%- endif %}\n {%- endif %}\n{%- endfor %}\n{%- if add_generation_prompt %}\n {{- '<|im_start|>assistant\\n' }}\n{%- endif %}\n"

|

| 3 |

+

}
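To make the template easier to follow, here is a simplified Python re-implementation of its main path: an optional leading system message, user turns with image and text items, assistant text turns, and the generation prompt. It is a sketch for reading the template, not a replacement for it; tool definitions, tool calls, tool responses, and video items are deliberately left out, and `render_prompt` is a hypothetical name.

```python
IMAGE_PLACEHOLDER = "<|vision_start|><|image_pad|><|vision_end|>"


def _text_of(content):
    """Join the text parts of a message, as the template does."""
    if isinstance(content, str):
        return content
    return "".join(item["text"] for item in content if "text" in item)


def render_prompt(messages, add_generation_prompt=True, add_vision_id=False):
    """Simplified rendering: no tools, no tool responses, no video."""
    out = []
    image_count = 0
    if messages and messages[0]["role"] == "system":
        out.append("<|im_start|>system\n" + _text_of(messages[0]["content"]) + "<|im_end|>\n")
    for message in messages:
        role, content = message["role"], message["content"]
        if role == "user":
            out.append("<|im_start|>user\n")
            if isinstance(content, str):
                out.append(content)
            else:
                for item in content:
                    if item.get("type") == "image" or "image" in item or "image_url" in item:
                        image_count += 1
                        if add_vision_id:
                            out.append(f"Picture {image_count}: ")
                        out.append(IMAGE_PLACEHOLDER)
                    elif "text" in item:
                        out.append(item["text"])
            out.append("<|im_end|>\n")
        elif role == "assistant":
            out.append("<|im_start|>assistant\n" + _text_of(content) + "<|im_end|>\n")
    if add_generation_prompt:
        out.append("<|im_start|>assistant\n")
    return "".join(out)
```

For a single user turn holding one image and the text "Describe this image.", this yields a user block with the image placeholder directly followed by the text, then an open assistant block.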

|

config.json

CHANGED

|

@@ -2,13 +2,259 @@

|

|

| 2 |

"architectures": [

|

| 3 |

"Qwen3VLForConditionalGeneration"

|

| 4 |

],

|

| 5 |

-

"torch_dtype": "bfloat16",

|

| 6 |

-

"eos_token_id": 151645,

|

| 7 |

"image_token_id": 151655,

|

| 8 |

"model_type": "qwen3_vl",

|

| 9 |

"pad_token_id": 151654,

|

| 10 |

"quantization_config": {

|

| 11 |

"activation_scheme": "dynamic",

|

| 12 |

"modules_to_not_convert": [

|

| 13 |

"lm_head",

|

| 14 |

"model.visual"

|

|

@@ -51,7 +297,7 @@

|

|

| 51 |

"vocab_size": 151936

|

| 52 |

},

|

| 53 |

"tie_word_embeddings": false,

|

| 54 |

-

"transformers_version": "4.57.

|

| 55 |

"unsloth_fixed": true,

|

| 56 |

"video_token_id": 151656,

|

| 57 |

"vision_config": {

|

|

@@ -61,7 +307,6 @@

|

|

| 61 |

17

|

| 62 |

],

|

| 63 |

"depth": 24,

|

| 64 |

-

"torch_dtype": "bfloat16",

|

| 65 |

"hidden_act": "gelu_pytorch_tanh",

|

| 66 |

"hidden_size": 1024,

|

| 67 |

"in_channels": 3,

|

|

@@ -77,4 +322,4 @@

|

|

| 77 |

},

|

| 78 |

"vision_end_token_id": 151653,

|

| 79 |

"vision_start_token_id": 151652

|

| 80 |

-

}

|

|

|

|

| 2 |

"architectures": [

|

| 3 |

"Qwen3VLForConditionalGeneration"

|

| 4 |

],

|

|

|

|

|

|

|

| 5 |

"image_token_id": 151655,

|

| 6 |

"model_type": "qwen3_vl",

|

| 7 |

"pad_token_id": 151654,

|

| 8 |

"quantization_config": {

|

| 9 |

"activation_scheme": "dynamic",

|

| 10 |

+

"fmt": "e4m3",

|

| 11 |

+

"ignored_layers": [

|

| 12 |

+

"lm_head",

|

| 13 |

+

"model.visual.merger.linear_fc1",

|

| 14 |

+

"model.visual.merger.linear_fc2",

|

| 15 |

+

"model.visual.merger.norm",

|

| 16 |

+

"model.visual.patch_embed.proj",

|

| 17 |

+

"model.visual.pos_embed",

|

| 18 |

+

"visual.merger.linear_fc1",

|

| 19 |

+

"visual.merger.linear_fc2",

|

| 20 |

+

"visual.merger.norm",

|

| 21 |

+

"visual.patch_embed.proj",

|

| 22 |

+

"visual.pos_embed",

|

| 23 |

+

"model.visual.blocks.0.attn.proj",

|

| 24 |

+

"model.visual.blocks.0.attn.qkv",

|

| 25 |

+

"model.visual.blocks.0.mlp.linear_fc1",

|

| 26 |

+

"model.visual.blocks.0.mlp.linear_fc2",

|

| 27 |

+

"visual.blocks.0.attn.proj",

|

| 28 |

+

"visual.blocks.0.attn.qkv_proj",

|

| 29 |

+

"visual.blocks.0.mlp.linear_fc1",

|

| 30 |

+

"visual.blocks.0.mlp.linear_fc2",

|

| 31 |

+

"model.visual.blocks.1.attn.proj",

|

| 32 |

+

"model.visual.blocks.1.attn.qkv",

|

| 33 |

+

"model.visual.blocks.1.mlp.linear_fc1",

|

| 34 |

+

"model.visual.blocks.1.mlp.linear_fc2",

|

| 35 |

+

"visual.blocks.1.attn.proj",

|

| 36 |

+

"visual.blocks.1.attn.qkv_proj",

|

| 37 |

+

"visual.blocks.1.mlp.linear_fc1",

|

| 38 |

+

"visual.blocks.1.mlp.linear_fc2",

|

| 39 |

+

"model.visual.blocks.2.attn.proj",

|

| 40 |

+

"model.visual.blocks.2.attn.qkv",

|

| 41 |

+

"model.visual.blocks.2.mlp.linear_fc1",

|

| 42 |

+

"model.visual.blocks.2.mlp.linear_fc2",

|

| 43 |

+

"visual.blocks.2.attn.proj",

|

| 44 |

+

"visual.blocks.2.attn.qkv_proj",

|

| 45 |

+

"visual.blocks.2.mlp.linear_fc1",

|

| 46 |

+

"visual.blocks.2.mlp.linear_fc2",

|

| 47 |

+

"model.visual.blocks.3.attn.proj",

|

| 48 |

+

"model.visual.blocks.3.attn.qkv",

|

| 49 |

+

"model.visual.blocks.3.mlp.linear_fc1",

|

| 50 |

+

"model.visual.blocks.3.mlp.linear_fc2",

|

| 51 |

+

"visual.blocks.3.attn.proj",

|

| 52 |

+

"visual.blocks.3.attn.qkv_proj",

|

| 53 |

+

"visual.blocks.3.mlp.linear_fc1",

|

| 54 |

+

"visual.blocks.3.mlp.linear_fc2",

|

| 55 |

+

"model.visual.blocks.4.attn.proj",

|

| 56 |

+

"model.visual.blocks.4.attn.qkv",

|

| 57 |

+

"model.visual.blocks.4.mlp.linear_fc1",

|

| 58 |

+

"model.visual.blocks.4.mlp.linear_fc2",

|

| 59 |

+

"visual.blocks.4.attn.proj",

|

| 60 |

+

"visual.blocks.4.attn.qkv_proj",

|

| 61 |

+

"visual.blocks.4.mlp.linear_fc1",

|

| 62 |

+

"visual.blocks.4.mlp.linear_fc2",

|

| 63 |

+

"model.visual.blocks.5.attn.proj",

|

| 64 |

+

"model.visual.blocks.5.attn.qkv",

|

| 65 |

+

"model.visual.blocks.5.mlp.linear_fc1",

|

| 66 |

+

"model.visual.blocks.5.mlp.linear_fc2",

|

| 67 |

+

"visual.blocks.5.attn.proj",

|

| 68 |

+

"visual.blocks.5.attn.qkv_proj",

|

| 69 |

+

"visual.blocks.5.mlp.linear_fc1",

|

| 70 |

+

"visual.blocks.5.mlp.linear_fc2",

|

| 71 |

+

"model.visual.blocks.6.attn.proj",

|

| 72 |

+

"model.visual.blocks.6.attn.qkv",

|

| 73 |

+

"model.visual.blocks.6.mlp.linear_fc1",

|

| 74 |

+

"model.visual.blocks.6.mlp.linear_fc2",

|

| 75 |

+

"visual.blocks.6.attn.proj",

|

| 76 |

+

"visual.blocks.6.attn.qkv_proj",

|

| 77 |

+

"visual.blocks.6.mlp.linear_fc1",

|

| 78 |

+

"visual.blocks.6.mlp.linear_fc2",

|

| 79 |

+

"model.visual.blocks.7.attn.proj",

|

| 80 |

+

"model.visual.blocks.7.attn.qkv",

|

| 81 |

+

"model.visual.blocks.7.mlp.linear_fc1",

|

| 82 |

+

"model.visual.blocks.7.mlp.linear_fc2",

|

| 83 |

+

"visual.blocks.7.attn.proj",

|

| 84 |

+

"visual.blocks.7.attn.qkv_proj",

|

| 85 |

+

"visual.blocks.7.mlp.linear_fc1",

|

| 86 |

+

"visual.blocks.7.mlp.linear_fc2",

|

| 87 |

+

"model.visual.blocks.8.attn.proj",

|

| 88 |

+

"model.visual.blocks.8.attn.qkv",

|

| 89 |

+

"model.visual.blocks.8.mlp.linear_fc1",

|

| 90 |

+

"model.visual.blocks.8.mlp.linear_fc2",

|

| 91 |

+

"visual.blocks.8.attn.proj",

|

| 92 |

+

"visual.blocks.8.attn.qkv_proj",

|

| 93 |

+

"visual.blocks.8.mlp.linear_fc1",

|

| 94 |

+

"visual.blocks.8.mlp.linear_fc2",

|

| 95 |

+

"model.visual.blocks.9.attn.proj",

|

| 96 |

+

"model.visual.blocks.9.attn.qkv",

|

| 97 |

+

"model.visual.blocks.9.mlp.linear_fc1",

|

| 98 |

+

"model.visual.blocks.9.mlp.linear_fc2",

|

| 99 |

+

"visual.blocks.9.attn.proj",

|

| 100 |

+

"visual.blocks.9.attn.qkv_proj",

|

| 101 |

+

"visual.blocks.9.mlp.linear_fc1",

|

| 102 |

+

"visual.blocks.9.mlp.linear_fc2",

|

| 103 |

+

"model.visual.blocks.10.attn.proj",

|

| 104 |

+

"model.visual.blocks.10.attn.qkv",

|

| 105 |

+

"model.visual.blocks.10.mlp.linear_fc1",

|

| 106 |

+

"model.visual.blocks.10.mlp.linear_fc2",

|

| 107 |

+

"visual.blocks.10.attn.proj",

|

| 108 |

+

"visual.blocks.10.attn.qkv_proj",

|

| 109 |

+

"visual.blocks.10.mlp.linear_fc1",

|

| 110 |

+

"visual.blocks.10.mlp.linear_fc2",

|

| 111 |

+

"model.visual.blocks.11.attn.proj",

|

| 112 |

+

"model.visual.blocks.11.attn.qkv",

|

| 113 |

+

"model.visual.blocks.11.mlp.linear_fc1",

|

| 114 |

+

"model.visual.blocks.11.mlp.linear_fc2",

|

| 115 |

+

"visual.blocks.11.attn.proj",

|

| 116 |

+

"visual.blocks.11.attn.qkv_proj",

|

| 117 |

+

"visual.blocks.11.mlp.linear_fc1",

|

| 118 |

+

"visual.blocks.11.mlp.linear_fc2",

|

| 119 |

+

"model.visual.blocks.12.attn.proj",

|

| 120 |

+

"model.visual.blocks.12.attn.qkv",

|

| 121 |

+

"model.visual.blocks.12.mlp.linear_fc1",

|

| 122 |

+

"model.visual.blocks.12.mlp.linear_fc2",

|

| 123 |

+

"visual.blocks.12.attn.proj",

|

| 124 |

+

"visual.blocks.12.attn.qkv_proj",

|

| 125 |

+

"visual.blocks.12.mlp.linear_fc1",

|

| 126 |

+

"visual.blocks.12.mlp.linear_fc2",

|

| 127 |

+

"model.visual.blocks.13.attn.proj",

|

| 128 |

+

"model.visual.blocks.13.attn.qkv",

|

| 129 |

+

"model.visual.blocks.13.mlp.linear_fc1",

|

| 130 |

+

"model.visual.blocks.13.mlp.linear_fc2",

|

| 131 |

+

"visual.blocks.13.attn.proj",

|

| 132 |

+

"visual.blocks.13.attn.qkv_proj",

|

| 133 |

+

"visual.blocks.13.mlp.linear_fc1",

|

| 134 |

+

"visual.blocks.13.mlp.linear_fc2",

|

| 135 |

+

"model.visual.blocks.14.attn.proj",

|

| 136 |

+

"model.visual.blocks.14.attn.qkv",

|

| 137 |

+

"model.visual.blocks.14.mlp.linear_fc1",

|

| 138 |

+

"model.visual.blocks.14.mlp.linear_fc2",

|

| 139 |

+

"visual.blocks.14.attn.proj",

|

| 140 |

+

"visual.blocks.14.attn.qkv_proj",

|

| 141 |

+

"visual.blocks.14.mlp.linear_fc1",

|

| 142 |

+

"visual.blocks.14.mlp.linear_fc2",

|

| 143 |

+

"model.visual.blocks.15.attn.proj",

|

| 144 |

+

"model.visual.blocks.15.attn.qkv",

|

| 145 |

+

"model.visual.blocks.15.mlp.linear_fc1",

|

| 146 |

+

"model.visual.blocks.15.mlp.linear_fc2",

|

| 147 |

+

"visual.blocks.15.attn.proj",

|

| 148 |

+

"visual.blocks.15.attn.qkv_proj",

|

| 149 |

+

"visual.blocks.15.mlp.linear_fc1",

|

| 150 |

+

"visual.blocks.15.mlp.linear_fc2",

|

| 151 |

+

"model.visual.blocks.16.attn.proj",

|

| 152 |

+

"model.visual.blocks.16.attn.qkv",

|

| 153 |

+

"model.visual.blocks.16.mlp.linear_fc1",

|

| 154 |

+

"model.visual.blocks.16.mlp.linear_fc2",

|

| 155 |

+

"visual.blocks.16.attn.proj",

|

| 156 |

+

"visual.blocks.16.attn.qkv_proj",

|

| 157 |

+

"visual.blocks.16.mlp.linear_fc1",

|

| 158 |

+

"visual.blocks.16.mlp.linear_fc2",

|

| 159 |

+

"model.visual.blocks.17.attn.proj",

|

| 160 |

+

"model.visual.blocks.17.attn.qkv",

|

| 161 |

+

"model.visual.blocks.17.mlp.linear_fc1",

|

| 162 |

+

"model.visual.blocks.17.mlp.linear_fc2",

|

| 163 |

+

"visual.blocks.17.attn.proj",

|

| 164 |

+

"visual.blocks.17.attn.qkv_proj",

|

| 165 |

+

"visual.blocks.17.mlp.linear_fc1",

|

| 166 |

+

"visual.blocks.17.mlp.linear_fc2",

|

| 167 |

+

"model.visual.blocks.18.attn.proj",

|

| 168 |

+

"model.visual.blocks.18.attn.qkv",

|

| 169 |

+

"model.visual.blocks.18.mlp.linear_fc1",

|

| 170 |

+

"model.visual.blocks.18.mlp.linear_fc2",

|

| 171 |

+

"visual.blocks.18.attn.proj",

|

| 172 |

+

"visual.blocks.18.attn.qkv_proj",

|

| 173 |

+

"visual.blocks.18.mlp.linear_fc1",

|

| 174 |

+

"visual.blocks.18.mlp.linear_fc2",

|

| 175 |

+

"model.visual.blocks.19.attn.proj",

|

| 176 |

+

"model.visual.blocks.19.attn.qkv",

|

| 177 |

+

"model.visual.blocks.19.mlp.linear_fc1",

|

| 178 |

+

"model.visual.blocks.19.mlp.linear_fc2",

|

| 179 |

+

"visual.blocks.19.attn.proj",

|

| 180 |

+

"visual.blocks.19.attn.qkv_proj",

|

| 181 |

+

"visual.blocks.19.mlp.linear_fc1",

|

| 182 |

+

"visual.blocks.19.mlp.linear_fc2",

|

| 183 |

+

"model.visual.blocks.20.attn.proj",

|

| 184 |

+

"model.visual.blocks.20.attn.qkv",

|

| 185 |

+

"model.visual.blocks.20.mlp.linear_fc1",

|

| 186 |

+

"model.visual.blocks.20.mlp.linear_fc2",

|

| 187 |

+

"visual.blocks.20.attn.proj",

|

| 188 |

+

"visual.blocks.20.attn.qkv_proj",

|

| 189 |

+

"visual.blocks.20.mlp.linear_fc1",

|

| 190 |

+

"visual.blocks.20.mlp.linear_fc2",

|

| 191 |

+

"model.visual.blocks.21.attn.proj",

|

| 192 |

+

"model.visual.blocks.21.attn.qkv",

|

| 193 |

+

"model.visual.blocks.21.mlp.linear_fc1",

|

| 194 |

+

"model.visual.blocks.21.mlp.linear_fc2",

|

| 195 |

+

"visual.blocks.21.attn.proj",

|

| 196 |

+

"visual.blocks.21.attn.qkv_proj",

|

| 197 |

+

"visual.blocks.21.mlp.linear_fc1",

|

| 198 |

+

"visual.blocks.21.mlp.linear_fc2",

|

| 199 |

+

"model.visual.blocks.22.attn.proj",

|

| 200 |

+

"model.visual.blocks.22.attn.qkv",

|

| 201 |

+

"model.visual.blocks.22.mlp.linear_fc1",

|

| 202 |

+

"model.visual.blocks.22.mlp.linear_fc2",

|

| 203 |

+

"visual.blocks.22.attn.proj",

|

| 204 |

+

"visual.blocks.22.attn.qkv_proj",

|

| 205 |

+

"visual.blocks.22.mlp.linear_fc1",

|

| 206 |

+

"visual.blocks.22.mlp.linear_fc2",

|

| 207 |

+

"model.visual.blocks.23.attn.proj",

|

| 208 |

+

"model.visual.blocks.23.attn.qkv",

|

| 209 |

+

"model.visual.blocks.23.mlp.linear_fc1",

|

| 210 |

+

"model.visual.blocks.23.mlp.linear_fc2",

|

| 211 |

+

"visual.blocks.23.attn.proj",

|

| 212 |

+

"visual.blocks.23.attn.qkv_proj",

|

| 213 |

+

"visual.blocks.23.mlp.linear_fc1",

|

| 214 |

+

"visual.blocks.23.mlp.linear_fc2",

|

| 215 |

+

"model.visual.blocks.24.attn.proj",

|

| 216 |

+

"model.visual.blocks.24.attn.qkv",

|

| 217 |

+

"model.visual.blocks.24.mlp.linear_fc1",

|

| 218 |

+

"model.visual.blocks.24.mlp.linear_fc2",

|

| 219 |

+

"visual.blocks.24.attn.proj",

|

| 220 |

+

"visual.blocks.24.attn.qkv_proj",

|

| 221 |

+

"visual.blocks.24.mlp.linear_fc1",

|

| 222 |

+

"visual.blocks.24.mlp.linear_fc2",

|

| 223 |

+

"model.visual.blocks.25.attn.proj",

|

| 224 |

+

"model.visual.blocks.25.attn.qkv",

|

| 225 |

+

"model.visual.blocks.25.mlp.linear_fc1",

|

| 226 |

+

"model.visual.blocks.25.mlp.linear_fc2",

|

| 227 |

+

"visual.blocks.25.attn.proj",

|

| 228 |

+

"visual.blocks.25.attn.qkv_proj",

|

| 229 |

+

"visual.blocks.25.mlp.linear_fc1",

|

| 230 |

+

"visual.blocks.25.mlp.linear_fc2",

|

| 231 |

+

"model.visual.blocks.26.attn.proj",

|

| 232 |

+

"model.visual.blocks.26.attn.qkv",

|

| 233 |

+

"model.visual.blocks.26.mlp.linear_fc1",

|

| 234 |

+

"model.visual.blocks.26.mlp.linear_fc2",

|

| 235 |

+

"visual.blocks.26.attn.proj",

|

| 236 |

+

"visual.blocks.26.attn.qkv_proj",

|

| 237 |

+

"visual.blocks.26.mlp.linear_fc1",

|

| 238 |

+

"visual.blocks.26.mlp.linear_fc2",

|

| 239 |

+

"model.visual.deepstack_merger_list.0.linear_fc1",

|

| 240 |

+

"model.visual.deepstack_merger_list.0.linear_fc2",

|

| 241 |

+

"model.visual.deepstack_merger_list.0.norm",

|

| 242 |

+

"visual.deepstack_merger_list.0.linear_fc1",

|

| 243 |

+

"visual.deepstack_merger_list.0.linear_fc2",

|

| 244 |

+

"visual.deepstack_merger_list.0.norm",

|

| 245 |

+

"model.visual.deepstack_merger_list.1.linear_fc1",

|

| 246 |

+

"model.visual.deepstack_merger_list.1.linear_fc2",

|

| 247 |

+

"model.visual.deepstack_merger_list.1.norm",

|

| 248 |

+

"visual.deepstack_merger_list.1.linear_fc1",

|

| 249 |

+

"visual.deepstack_merger_list.1.linear_fc2",

|

| 250 |

+

"visual.deepstack_merger_list.1.norm",

|

| 251 |

+

"model.visual.deepstack_merger_list.2.linear_fc1",

|

| 252 |

+

"model.visual.deepstack_merger_list.2.linear_fc2",

|

| 253 |

+

"model.visual.deepstack_merger_list.2.norm",

|

| 254 |

+

"visual.deepstack_merger_list.2.linear_fc1",

|

| 255 |

+

"visual.deepstack_merger_list.2.linear_fc2",

|

| 256 |

+

"visual.deepstack_merger_list.2.norm"

|

| 257 |

+

],

|

| 258 |

"modules_to_not_convert": [

|

| 259 |

"lm_head",

|

| 260 |

"model.visual"

|

|

|

|

| 297 |

"vocab_size": 151936

|

| 298 |

},

|

| 299 |

"tie_word_embeddings": false,

|

| 300 |

+

"transformers_version": "4.57.1",

|

| 301 |

"unsloth_fixed": true,

|

| 302 |

"video_token_id": 151656,

|

| 303 |

"vision_config": {

|

|

|

|

| 307 |

17

|

| 308 |

],

|

| 309 |

"depth": 24,

|

|

|

|

| 310 |

"hidden_act": "gelu_pytorch_tanh",

|

| 311 |

"hidden_size": 1024,

|

| 312 |

"in_channels": 3,

|

|

|

|

| 322 |

},

|

| 323 |

"vision_end_token_id": 151653,

|

| 324 |

"vision_start_token_id": 151652

|

| 325 |

+

}
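The added `ignored_layers` list names each vision module twice, once with a `model.visual.` prefix and once with a `visual.` prefix (the latter using `qkv_proj` where the former uses `qkv`). The sketch below shows one way a loader could consult such a list to decide whether a module keeps its original precision. The exact-or-prefix matching rule, the function name `is_ignored`, and the language-model module name in the usage lines are assumptions for illustration; the diff does not say how any particular runtime interprets the field.

```python
def is_ignored(module_name, ignored_layers):
    """Return True if the module, or one of its parents, is listed.

    Assumed rule: an entry matches itself and any dotted child path.
    """
    return any(
        module_name == entry or module_name.startswith(entry + ".")
        for entry in ignored_layers
    )


# Excerpt of the list shown in the config.json diff above.
IGNORED_EXCERPT = [
    "lm_head",
    "model.visual.merger.linear_fc1",
    "model.visual.blocks.0.attn.qkv",
    "visual.blocks.0.attn.qkv_proj",
]

keeps_precision = is_ignored("model.visual.blocks.0.attn.qkv", IGNORED_EXCERPT)
# Hypothetical language-model module name, used only to show a non-match.
gets_quantized = not is_ignored("model.language_model.layers.0.mlp.up_proj", IGNORED_EXCERPT)
```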

|

generation_config.json

CHANGED

|

@@ -1,13 +1,14 @@

|

|

| 1 |

{

|

| 2 |

"bos_token_id": 151643,

|

|

|

|

| 3 |

"do_sample": true,

|

| 4 |

"eos_token_id": [

|

| 5 |

151645,

|

| 6 |

151643

|

| 7 |

],

|

| 8 |

-

"pad_token_id": 151654,

|

| 9 |

-

"temperature": 0.7,

|

| 10 |

"top_k": 20,

|

| 11 |

"top_p": 0.8,

|

| 12 |

-

"

|

| 13 |

-

|

|

| 1 |

{

|

| 2 |

"bos_token_id": 151643,

|

| 3 |

+

"pad_token_id": 151643,

|

| 4 |

"do_sample": true,

|

| 5 |

"eos_token_id": [

|

| 6 |

151645,

|

| 7 |

151643

|

| 8 |

],

|

|

|

|

|

|

|

| 9 |

"top_k": 20,

|

| 10 |

"top_p": 0.8,

|

| 11 |

+

"repetition_penalty": 1.0,

|

| 12 |

+

"temperature": 0.7,

|

| 13 |

+

"transformers_version": "4.56.0"

|

| 14 |

+

}

|

model-00001-of-00002.safetensors

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:39c3e38447b0fe8b94c95a01a6b3f1171f1db2a4a6e03ccf55e3b6c0c5f07a44

|

| 3 |

+

size 5366863440

|

model-00002-of-00002.safetensors

CHANGED

|

@@ -1,3 +1,3 @@

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

-

oid sha256:

|

| 3 |

-

size

|

|

|

|

| 1 |

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:ece22ccb44f97514a08b5bf10513530e0928f2e9bc93c7dc0efe8e18796fe4d9

|

| 3 |

+

size 654372016
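The two `.safetensors` entries above are Git LFS pointer files: a version line, a `sha256` object id, and a byte size. A downloaded shard can be checked against its pointer with the standard library alone. This is a minimal sketch; `parse_lfs_pointer` and `matches_pointer` are hypothetical helper names, and the pointer text is copied from the diff for the second shard.

```python
import hashlib

POINTER_TEXT = """version https://git-lfs.github.com/spec/v1
oid sha256:ece22ccb44f97514a08b5bf10513530e0928f2e9bc93c7dc0efe8e18796fe4d9
size 654372016
"""


def parse_lfs_pointer(text):
    """Split a pointer file into version, hash algorithm, digest, and size."""
    fields = {}
    for line in text.splitlines():
        key, _, value = line.strip().partition(" ")
        if key:
            fields[key] = value
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


def matches_pointer(path, pointer, chunk_size=1 << 20):
    """Stream the file and compare its size and digest with the pointer."""
    hasher = hashlib.new(pointer["algo"])
    size = 0
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            hasher.update(chunk)
            size += len(chunk)
    return size == pointer["size"] and hasher.hexdigest() == pointer["digest"]


pointer = parse_lfs_pointer(POINTER_TEXT)
```

Calling `matches_pointer` with the local path of `model-00002-of-00002.safetensors` and `pointer` would then report whether the download is complete and unmodified.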

|

model.safetensors.index.json

CHANGED

|

The diff for this file is too large to render.

See raw diff

|

|

|